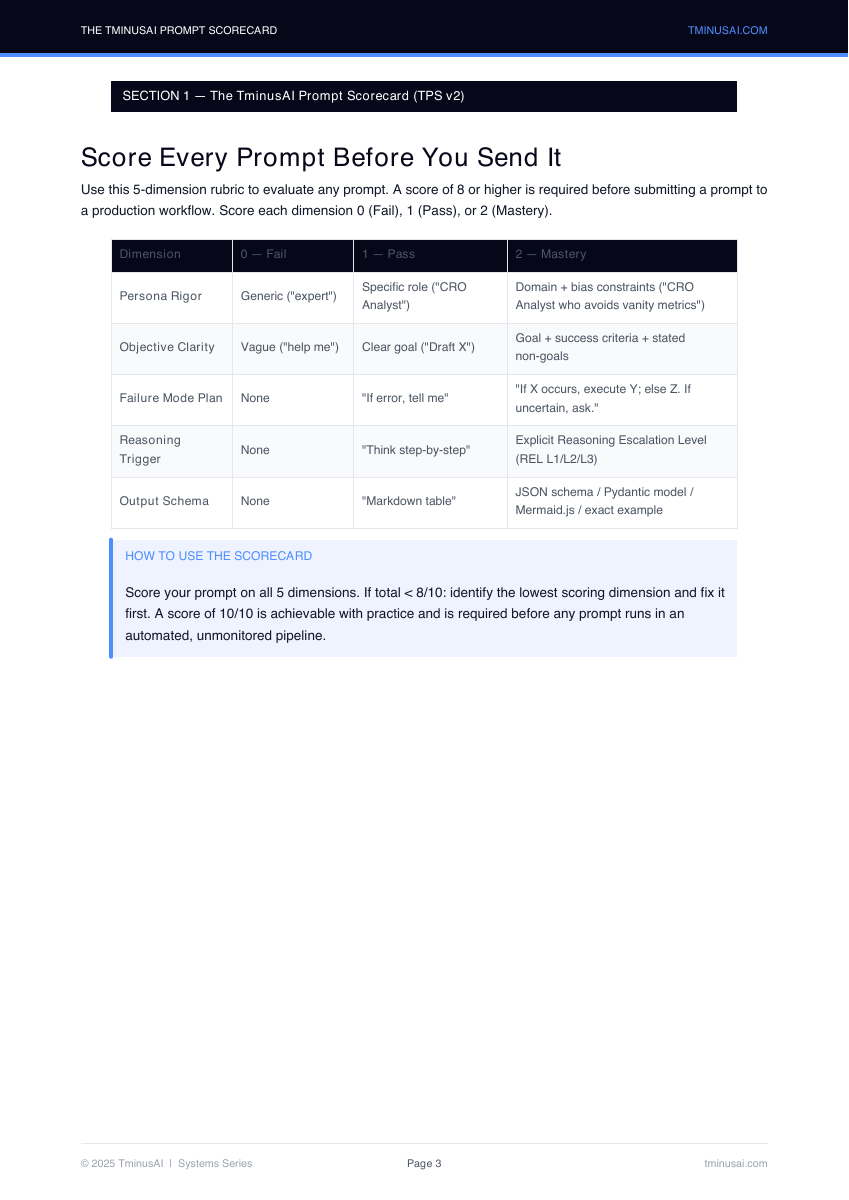

Score prompts before they hit production

Each prompt is scored on five dimensions: persona rigor, objective clarity, failure-mode planning, reasoning trigger, and output schema.

- Spot weak prompts before they generate expensive noise

- Push every prompt toward explicit constraints and executable output formats
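As a rough illustration, a five-dimension scorecard could be represented as below. This is a minimal sketch, not the tool's actual implementation: the `PromptScore` class, the 0 to 5 scale, and the weak-dimension threshold of 3 are all assumptions made for the example, since the text above names only the dimensions.

```python
from __future__ import annotations

from dataclasses import dataclass, fields

# Assumption: each dimension is scored 0-5 by a reviewer.
# The scale is hypothetical; only the dimension names come from the text.
MAX_PER_DIMENSION = 5


@dataclass(frozen=True)
class PromptScore:
    """Hypothetical scorecard covering the five dimensions."""

    persona_rigor: int
    objective_clarity: int
    failure_mode_planning: int
    reasoning_trigger: int
    output_schema: int

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 0-5 range.
        for f in fields(self):
            value = getattr(self, f.name)
            if not 0 <= value <= MAX_PER_DIMENSION:
                raise ValueError(
                    f"{f.name} must be between 0 and {MAX_PER_DIMENSION}, got {value}"
                )

    def total(self) -> int:
        """Sum of all five dimension scores."""
        return sum(getattr(self, f.name) for f in fields(self))

    def weak_dimensions(self, threshold: int = 3) -> list[str]:
        """Names of dimensions scoring below the (assumed) threshold."""
        return [f.name for f in fields(self) if getattr(self, f.name) < threshold]

    def ready_for_production(self, threshold: int = 3) -> bool:
        """True only when no dimension falls below the threshold."""
        return not self.weak_dimensions(threshold)


score = PromptScore(
    persona_rigor=4,
    objective_clarity=5,
    failure_mode_planning=1,
    reasoning_trigger=3,
    output_schema=2,
)
print(score.total())                 # 15
print(score.weak_dimensions())       # ['failure_mode_planning', 'output_schema']
print(score.ready_for_production())  # False
```

Reporting the weak dimensions by name, rather than only a total, points the author at what to fix: here the prompt would need explicit failure handling and a stricter output format before shipping.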